Cognee - Build AI memory with a Knowledge Engine that learns

Demo · Docs · Learn More · Join Discord · Join r/AIMemory · Community Plugins & Add-ons


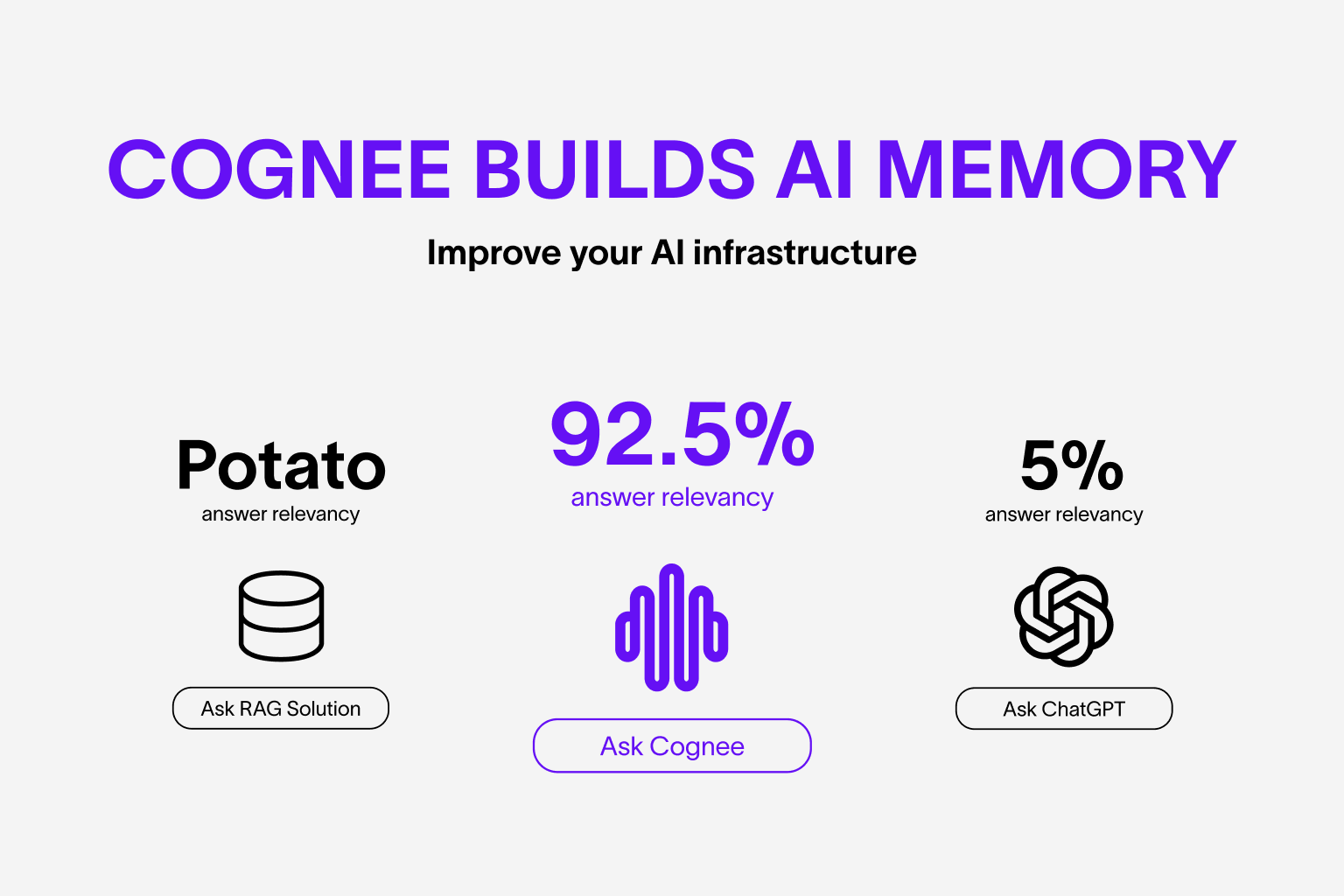

Use our knowledge engine to build personalized and dynamic memory for AI Agents.

🌐 Available languages: Deutsch | Español | Français | 日本語 | 한국어 | Português | Русский | 中文